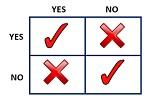

Unlike parametric tests, nonparametric tests do not assume that the data follow a probability distribution with particular parameters, so they are often called distribution-free tests. They are less sensitive, however, and should not replace parametric tests when the data meet the conditions those tests require; in that case, parametric tests yield more accurate results.

Nonparametric tests share the overall objectives of parametric tests: estimating population parameters and testing hypotheses. However, they are less powerful. When its assumptions hold, a parametric test is more likely to reject a null hypothesis that is false, and so less likely to make a Type II error. Therefore, nonparametric tests should be used only when the assumptions for parametric tests are violated.

Nonparametric tests can be applied to interval or ratio data, the measurement levels that parametric tests require. More often, however, they are applied to ranked or ordinal data, or to nominal data, where values are recorded as frequency counts. Furthermore, unlike parametric tests, they are not strongly affected by outliers, because rank-based procedures use the order of the values rather than their magnitudes, and they do not require the population variances to be equal.
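To make the point about ranked data concrete, here is a minimal sketch of how observations might be converted to ranks. The helper name `average_ranks` and the sample values are illustrative assumptions, not taken from any particular library or data set. Tied values share the average of the positions they occupy, which is a common convention.

```python
def average_ranks(values):
    """Return 1-based ranks; tied values share the average of their positions."""
    # Indices of the values, ordered from smallest to largest value.
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # average of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


# Hypothetical measurements: the second list replaces 16 with an extreme value.
typical = [12, 15, 11, 14, 16]
with_outlier = [12, 15, 11, 14, 160]

print(average_ranks(typical))
print(average_ranks(with_outlier))
```

Both lists produce the same ranks, since the extreme value is still simply the largest observation. This is one way to see why a rank-based test is largely unaffected by an outlier.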

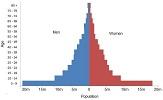

Additionally, nonparametric tests typically compare medians rather than means. Parametric tests require normally distributed data, for which the mean and median are equal. That is not necessarily true of the data used in nonparametric tests, and because the median is far less affected by extreme values than the mean, outliers do not impede the use of nonparametric tests.
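The difference between the two measures can be illustrated with a short sketch using Python's standard `statistics` module. The two samples are made-up values chosen only to show the effect of a single outlier.

```python
from statistics import mean, median

# Hypothetical samples: identical except for the last value.
symmetric = [2, 3, 4, 5, 6]
skewed = [2, 3, 4, 5, 60]

# In the symmetric sample the mean and median coincide.
print(mean(symmetric), median(symmetric))

# One extreme value pulls the mean upward but leaves the median unchanged.
print(mean(skewed), median(skewed))
```

In the second sample the mean rises from 4 to 14.8, while the median stays at 4.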

Nonparametric tests are especially useful when a researcher does not know whether a data set is normally distributed. Nonparametric alternatives exist for the parametric tests used to determine whether a relationship exists between data sets or whether two or more data sets differ. Examples include the Mann-Whitney U test and McNemar's test.
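As a rough illustration of these two tests, the sketch below computes only their test statistics in plain Python. The function names, sample values, and counts are hypothetical. It does not compute p-values; in practice a statistics library would normally be used for that (SciPy, for example, provides `scipy.stats.mannwhitneyu`). The McNemar statistic shown is the uncorrected chi-square form, which is an assumption about which variant is wanted.

```python
def mann_whitney_u(x, y):
    """Return (U_x, U_y) for two independent samples.

    U_x counts the pairs (xi, yj) in which xi exceeds yj; ties count as half.
    """
    u_x = 0.0
    for xi in x:
        for yj in y:
            if xi > yj:
                u_x += 1.0
            elif xi == yj:
                u_x += 0.5
    u_y = len(x) * len(y) - u_x  # the two statistics sum to n_x * n_y
    return u_x, u_y


def mcnemar_statistic(b, c):
    """Uncorrected McNemar chi-square statistic from the discordant pair counts.

    b and c are the off-diagonal cells of a 2x2 table of paired nominal data.
    """
    if b + c == 0:
        raise ValueError("at least one discordant pair is required")
    return (b - c) ** 2 / (b + c)


# Hypothetical measurements from two independent groups.
group_a = [1.1, 2.3, 2.9, 3.4]
group_b = [3.0, 4.2, 5.1, 6.8]
print(mann_whitney_u(group_a, group_b))

# Hypothetical paired yes/no outcomes: 15 pairs changed one way, 5 the other.
print(mcnemar_statistic(15, 5))
```

The Mann-Whitney statistic depends only on which value in each pair is larger, so it suits ranked or ordinal data, while McNemar's statistic works from frequency counts of paired nominal outcomes.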

References:

Image Credit: Wikimedia Commons

© BrainMass Inc. brainmass.com June 30, 2024, 2:34 am