Error control, through detection and correction, is what allows reliable delivery of digital data even over an unreliable communication channel. Noise, system bugs and other failures can threaten the integrity of information on its journey from source to destination, but computer scientists have developed rigorous methods for checking that a digital signal arrives as intended and for correcting faults when it does not.

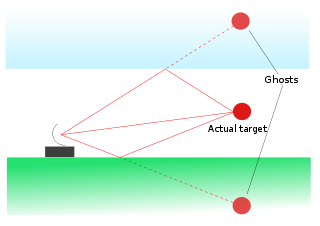

Example of an artifact error in the natural sciences that can affect signals

The first component of error control is the detection of faults in transmission. Error-detection algorithms center on verifying that check bits, typically appended to the meaningful data, are logically consistent with that data. These bits are redundant in that they carry no new message content, but they are essential to error detection: if the received data and check bits no longer agree, the integrity of the signal has been compromised. The correction component then works out which bits changed, usually from the pattern of checks that fail, and flips them back to restore the original, error-free state of the signal.
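As a minimal sketch of how check bits support both detection and correction, the example below uses the classic Hamming(7,4) code, which protects four data bits with three check bits and can correct any single flipped bit. The function names are illustrative assumptions, and in this particular layout the check bits sit at positions 1, 2 and 4 of the codeword rather than at the end.

```python
def hamming74_encode(data):
    """Encode 4 data bits as a 7-bit codeword (positions 1..7).

    Check bits occupy positions 1, 2 and 4; data bits occupy 3, 5, 6 and 7.
    """
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def hamming74_decode(word):
    """Return (data_bits, error_position); error_position is 0 if all checks pass.

    Assumes at most one bit was flipped in transit.
    """
    c = list(word)
    # Recompute each parity check over the received bits.
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The failing checks, read as a binary number, name the flipped position.
    position = s1 + 2 * s2 + 4 * s4
    if position:
        c[position - 1] ^= 1  # flip the faulty bit back
    return [c[2], c[4], c[5], c[6]], position


codeword = hamming74_encode([1, 0, 1, 1])
damaged = list(codeword)
damaged[4] ^= 1  # simulate noise flipping the bit at position 5
recovered, position = hamming74_decode(damaged)
print(recovered, position)
```

Each check bit covers a different subset of positions, so the combination of checks that fail singles out exactly one position. Two or more flipped bits exceed what this small code can correct.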

These codes can be either systematic or non-systematic. In a systematic code, the original data is sent unchanged with check bits attached, the check bits being derived from the data by some deterministic algorithm; in a non-systematic code, the message is transformed into an encoded form from which the original must be recovered. Errors are then handled via either ARQ (automatic repeat request), in which the receiver simply notes that there is a problem and asks for the data to be sent again, or FEC (forward error correction), in which the sender includes enough redundancy for the receiver to correct a limited number of errors itself, without retransmission. The two approaches may also be combined, so that minor errors are corrected at the receiver without resending the data, while major errors trigger a retransmission. This combination is known as hybrid ARQ (HARQ).
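The retransmission approach can be sketched as a simple stop-and-wait loop. This is an illustration under stated assumptions, not a real protocol implementation: the helper names (`frame`, `check`, `send_with_arq`, `make_flaky_channel`) are hypothetical, the channel is simulated by a function that corrupts the first few transmissions, and a CRC-32 from Python's standard `zlib` module stands in for the check bits.

```python
import zlib


def frame(payload: bytes) -> bytes:
    """Append a 4-byte CRC-32 so the receiver can detect corruption."""
    return payload + zlib.crc32(payload).to_bytes(4, "big")


def check(received: bytes):
    """Return the payload if its CRC matches, otherwise None."""
    payload, crc = received[:-4], received[-4:]
    if zlib.crc32(payload).to_bytes(4, "big") == crc:
        return payload
    return None


def send_with_arq(payload: bytes, channel, max_attempts: int = 5):
    """Resend the framed payload until the receiver's check passes.

    Returns (payload, attempts_used). A failed check plays the role of
    the receiver's repeat request.
    """
    for attempt in range(1, max_attempts + 1):
        result = check(channel(frame(payload)))
        if result is not None:
            return result, attempt
    raise RuntimeError("no clean copy received within max_attempts")


def make_flaky_channel(bad_sends: int):
    """Simulated channel (an assumption for this demo): flips one bit
    in each of the first `bad_sends` transmissions, then behaves."""
    state = {"sent": 0}

    def channel(data: bytes) -> bytes:
        state["sent"] += 1
        if state["sent"] <= bad_sends:
            corrupted = bytearray(data)
            corrupted[0] ^= 0x01
            return bytes(corrupted)
        return data

    return channel


message, attempts = send_with_arq(b"hello", make_flaky_channel(2))
print(message, attempts)
```

A hybrid scheme would first attempt forward error correction on each received frame and fall back to this retransmission loop only when the damage exceeds what the code can repair.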

© BrainMass Inc. brainmass.com June 30, 2024, 10:12 am